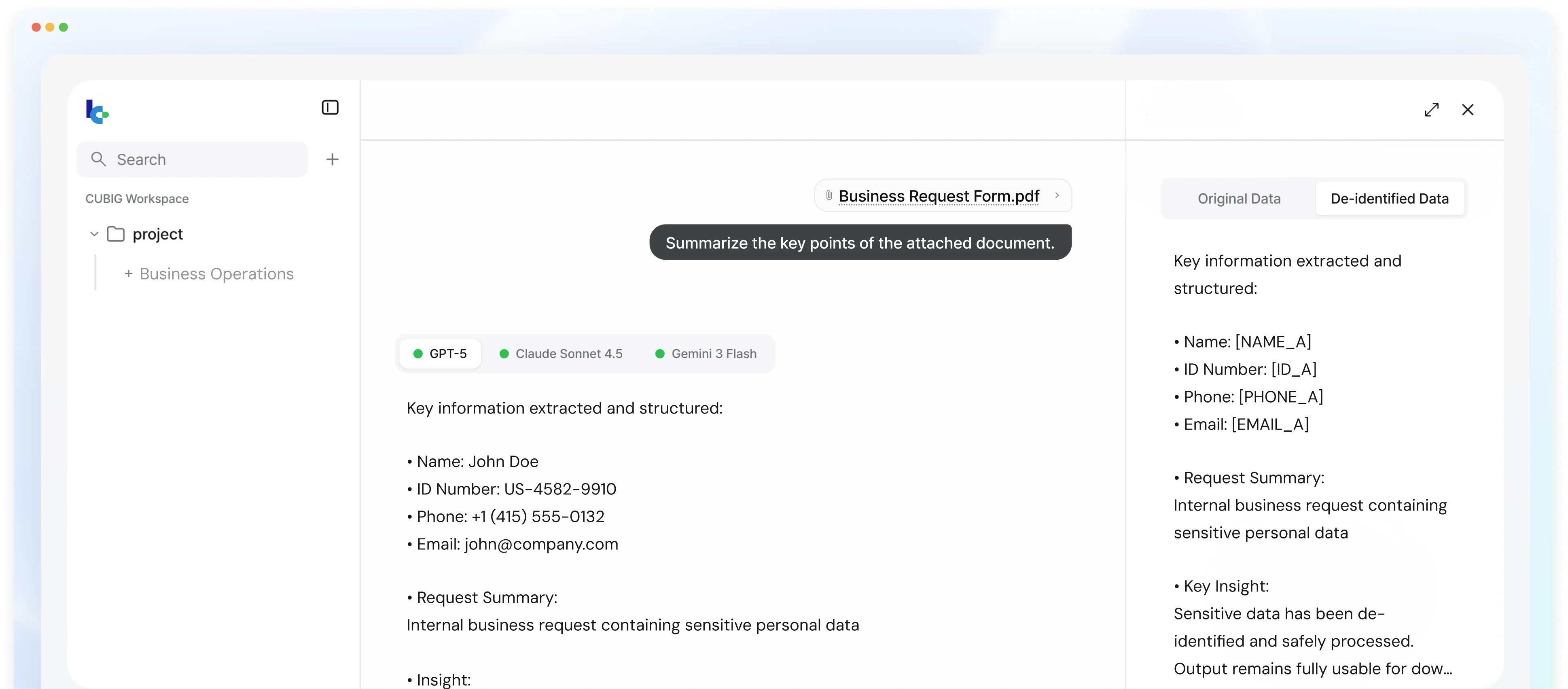

Use any AI on your real documents — without exposing a single line

Your sensitive documents go through LLM Capsule before reaching AI. Confidential names, figures, and terms are replaced locally — AI processes the safe version — then results are restored with your original data. Each organization defines what counts as sensitive.

Trusted by enterprises processing sensitive documents across finance, insurance, legal, healthcare, and telecom

Information Security Fast Track

GS Certification

ISO/IEC 27001

ISO/IEC 42001

Security Innovation Award

Startup World Cup

Next Rise Global Innovator

T Challenge 2026

AI Medical Innovation

Emerging AI+X Top 100

Gartner Vendor

Five capabilities that remove every barrier to enterprise AI

Other tools either block AI usage or destroy document context. LLM Capsule solves both — here's how.

Your data never leaves

Security team blocking AI adoption? With zero exposure, AI only sees safe placeholders. Even if the LLM provider logs everything, zero enterprise data is exposed.

Get real results back

AI outputs auto-restore with your original names, figures, and references — ready for reports, legal reviews, and client deliverables. No manual reconstruction.

You define what's sensitive

Standard PII categories aren't enough. Define project codes, deal terms, internal IDs, and any business-specific confidential markers — tailored to your organization.
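As a rough illustration of what organization-defined sensitivity rules could look like, the sketch below declares a few rules as regular expressions and scans a document for matches. The `SensitiveRule` class, the rule labels, and the patterns (`PRJ-2041`-style project codes, `EMP`-prefixed IDs, a deal codename) are hypothetical examples, not LLM Capsule's actual configuration format.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class SensitiveRule:
    """One organization-defined rule (hypothetical structure)."""
    label: str
    pattern: str


# Hypothetical rules an organization might add beyond standard PII.
RULES = [
    SensitiveRule("PROJECT_CODE", r"\bPRJ-\d{4}\b"),
    SensitiveRule("INTERNAL_ID", r"\bEMP\d{6}\b"),
    SensitiveRule("DEAL_TERM", r"\bProject Falcon\b"),
]


def find_sensitive(text, rules):
    """Return (label, matched_text, start, end) tuples sorted by position."""
    hits = []
    for rule in rules:
        for match in re.finditer(rule.pattern, text):
            hits.append((rule.label, match.group(0), match.start(), match.end()))
    return sorted(hits, key=lambda hit: hit[2])


text = "Budget for PRJ-2041 was approved by EMP004512 under Project Falcon."
for label, value, start, end in find_sensitive(text, RULES):
    print(f"{label}: {value!r} at {start}-{end}")
```

Keeping rules as data rather than code means a security team could review or extend them without touching the detection logic.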

Documents stay readable to AI

Tables, cross-references, and layouts survive the process intact. AI understands full document context — not broken fragments that produce useless outputs.

Use any AI model, anytime

ChatGPT today, Claude tomorrow, on-premise LLM next month. Switch freely — no re-engineering, no vendor lock-in. Protection stays consistent across every model.

Enabling AI adoption across regulated industries where sensitive data was the blocker

LLM Capsule unlocks AI usage on real enterprise documents across financial services, government, healthcare, and legal workflows — turning blocked projects into production deployments.

Enterprise AI enablement through a 3+2 architecture

LLM Capsule enables enterprise AI adoption on sensitive data through a 3+2 data layer architecture: three core enablement pillars plus two additional value capabilities that ensure output quality and model flexibility.

Zero Exposure

Sensitive data is replaced with safe placeholders (encapsulation) inside your environment before anything leaves. Original values never reach external AI services.

Zero exposure means the AI provider processes useful data but cannot reconstruct original sensitive values. Even if the provider logged, stored, or trained on the received data, no original enterprise information would be exposed. Encapsulation creates a data representation that is both processable by AI and opaque to the receiving service, so provider logs contain only placeholders and raw data never leaves your environment.
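The encapsulate-and-restore idea described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not LLM Capsule's implementation: the function names `encapsulate` and `restore`, the `[[ENTITY_n]]` placeholder format, and the explicit list of sensitive terms are all hypothetical.

```python
import re


def encapsulate(text, sensitive_terms):
    """Replace sensitive terms with placeholders in a single pass.

    Returns the protected text and a placeholder-to-original mapping.
    The mapping is meant to stay inside the local environment.
    """
    mapping = {}   # placeholder -> original value
    assigned = {}  # original value -> placeholder
    # Longest terms first so a short term never splits a longer one.
    ordered = sorted(set(sensitive_terms), key=len, reverse=True)
    if not ordered:
        return text, mapping
    pattern = re.compile("|".join(re.escape(term) for term in ordered))

    def swap(match):
        original = match.group(0)
        if original not in assigned:
            placeholder = f"[[ENTITY_{len(assigned) + 1}]]"
            assigned[original] = placeholder
            mapping[placeholder] = original
        # The same value always maps to the same placeholder.
        return assigned[original]

    return pattern.sub(swap, text), mapping


def restore(text, mapping):
    """Put the original values back into text returned by the AI model."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text


doc = "Acme Corp owes Jane Doe $4.2M. Acme Corp disputes it."
protected, mapping = encapsulate(doc, ["Acme Corp", "Jane Doe", "$4.2M"])
print(protected)
# [[ENTITY_1]] owes [[ENTITY_2]] [[ENTITY_3]]. [[ENTITY_1]] disputes it.
print(restore(protected, mapping) == doc)  # True
```

Because each distinct value gets a stable placeholder, the model can still tell that the party owing money and the party disputing it are the same entity, even though it never sees the name.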

AI-Enabled Enterprise Workflows

LLM Capsule plugs into the most common enterprise AI workflows. From document intake to output delivery, one data layer enables AI adoption on real documents.

Secure Document Summarization

AI generates executive summaries of sensitive documents — contracts, reports, filings — while all confidential elements are replaced with safe placeholders. Restored summaries contain real names, dates, and figures ready for business use.

- Contracts, reports, and filings protected

- Real names, dates, and figures restored in output

AI Claims Processing

Insurance and financial claims go through LLM Capsule before AI-powered classification, damage assessment, and fraud detection. Restored outputs feed directly into claims management systems with real policyholder data.

- Classification, damage assessment, fraud detection enabled

- Restored outputs feed directly into claims systems

Confidential Contract Review

AI extracts key terms, obligations, and risk clauses from protected contracts. Restored outputs include real party names, amounts, and clause references — ready for direct integration into deal management systems.

- Key terms, obligations, and risk clauses extracted

- Real party names, amounts, and references restored

Internal Report Generation

AI drafts internal reports from protected data sources — performance reviews, audit findings, compliance summaries. Restored reports contain real employee names, department data, and metric values.

- Performance reviews, audit findings, compliance summaries

- Real employee names, department data, and metrics restored

Enterprise data is never AI-ready by default

Every enterprise document contains sensitive information that cannot be sent to external AI models. But without real data, AI outputs are generic and unusable. This is the core barrier to enterprise AI adoption.

- Organizations cannot leverage AI capabilities without first making their data AI-ready.

- Traditional approaches — masking, redaction, tokenization, and prompt security gateways — were not designed for AI workflows. Masking and redaction permanently remove the data context that AI models need. Prompt gateways filter at the API level but cannot handle enterprise documents end to end.

- These tools create a fundamental adoption barrier: without a data layer that makes sensitive data AI-ready while keeping it protected, enterprise AI projects stall before they can demonstrate value.

| Approach | Method | Limitation | AI Workflow Impact |
|---|---|---|---|
| Masking & Redaction | Permanently removes data | Destroys context AI needs | Unusable [REDACTED] outputs requiring manual reconstruction |
| Prompt Security Gateways | API-level prompt filtering | No document-level protection | No output restoration capability |
| Synthetic Data Platforms | Artificial data generation | Training/testing only | Cannot replace real documents in live AI workflows |
| Security Team Blocks AI | Manual approval gate | Blocks all AI projects | Projects never demonstrate value before being cancelled |
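To make the first row of the table concrete, the sketch below contrasts irreversible redaction with reversible placeholders on a single contract clause. The party names, the `[[PARTY_n]]` token format, and both helper functions are hypothetical and serve only to illustrate the difference.

```python
import re

TERMS = ["Acme Corp", "Globex Ltd"]  # hypothetical sensitive party names
PATTERN = re.compile("|".join(re.escape(term) for term in TERMS))


def redact(text):
    """Irreversible: every match collapses into the same token."""
    return PATTERN.sub("[REDACTED]", text)


def placeholder(text):
    """Reversible: each distinct value keeps its own stable token."""
    return PATTERN.sub(
        lambda m: f"[[PARTY_{TERMS.index(m.group(0)) + 1}]]", text
    )


clause = "Acme Corp shall indemnify Globex Ltd."
print(redact(clause))       # [REDACTED] shall indemnify [REDACTED].
print(placeholder(clause))  # [[PARTY_1]] shall indemnify [[PARTY_2]].
```

After redaction, neither a model nor a reviewer can tell who indemnifies whom. With distinct placeholders the relationship survives, and the original names can be put back afterwards.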

From blocked AI projects to enabled enterprise AI with usable outputs

Enterprise AI is blocked or broken

- AI blocked entirely — security teams reject proposals due to data exposure risk

- Masking and redaction strip context — AI outputs are abstracted and unusable for enterprise workflows

- Manual review workflows persist — documents require human processing because AI cannot be trusted with real data

- Document structure destroyed — flat masking breaks tables, entity relationships, and cross-references

- Low-quality AI output — even when AI is permitted, outputs require extensive manual reconstruction to be usable

- Enterprise AI projects stall in pilot — no path from proof of concept to production deployment

AI adoption enabled on real enterprise data

- AI enabled on sensitive documents — the data layer handles protection so teams can focus on AI outcomes

- Real documents processed with best-in-class LLMs — ChatGPT, Claude, Gemini, Perplexity, or any LLM API

- Compliance satisfied — zero exposure architecture meets enterprise AI governance requirements automatically

- Restored outputs retain original business context — real names, real figures, real references restored locally

- Tables, layouts, cross-references, and document hierarchy fully preserved through structure-preserving processing

- 98% output similarity with zero data exposure — measured on real enterprise document processing workloads

A data layer between your enterprise and any LLM

LLM Capsule sits between your internal systems and external AI models. Raw data stays inside your environment — the trust boundary is never crossed by original data. AI only processes the protected version.
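One way to picture this data layer is as a thin wrapper around whatever model call an application already makes. The sketch below is an assumption-laden illustration: `protect`, `run_through_data_layer`, and `fake_model` are hypothetical names, and `fake_model` merely stands in for an external LLM API.

```python
import re
from typing import Callable, Dict, Iterable, Tuple


def protect(text: str, terms: Iterable[str]) -> Tuple[str, Dict[str, str]]:
    """Swap each sensitive term for a placeholder; the mapping stays local."""
    ordered = sorted(set(terms), key=len, reverse=True)
    mapping = {f"[[ENTITY_{i}]]": term for i, term in enumerate(ordered, start=1)}
    if not ordered:
        return text, mapping
    lookup = {term: token for token, term in mapping.items()}
    pattern = re.compile("|".join(re.escape(term) for term in ordered))
    return pattern.sub(lambda m: lookup[m.group(0)], text), mapping


def run_through_data_layer(
    document: str, terms: Iterable[str], call_model: Callable[[str], str]
) -> str:
    """Protect locally, call the external model, then restore locally."""
    protected, mapping = protect(document, terms)
    # Trust boundary: only `protected` is handed to the external model.
    response = call_model(protected)
    for token, original in mapping.items():
        response = response.replace(token, original)
    return response


def fake_model(prompt: str) -> str:
    """Stand-in for any external LLM API (hypothetical)."""
    return "Summary: " + prompt


result = run_through_data_layer(
    "Jane Doe approved the Acme Corp deal.", ["Jane Doe", "Acme Corp"], fake_model
)
print(result)  # Summary: Jane Doe approved the Acme Corp deal.
```

Because the model is passed in as a plain callable, swapping one provider for another only changes the `call_model` argument, while the protect and restore steps stay the same.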

Measured performance on real enterprise document processing workloads

These metrics are measured on enterprise documents with 2,200+ character average length across regulated industry workflows including finance, healthcare, legal, and public sector environments.

Frequently Asked Questions

LLM Capsule acts as an AI enablement data layer that encapsulates sensitive data locally before it leaves the enterprise environment. Only protected representations are sent to AI models. After processing, outputs are restored locally so they remain usable for real enterprise workflows. The original data never reaches external AI services — this is what makes it an AI enablement plugin rather than a monitoring or filtering tool.

See how LLM Capsule enables AI on your enterprise documents

Bring your documents, deployment constraints, and evaluation criteria. We demonstrate how the AI enablement data layer works on your actual data, in your environment, against your compliance requirements.